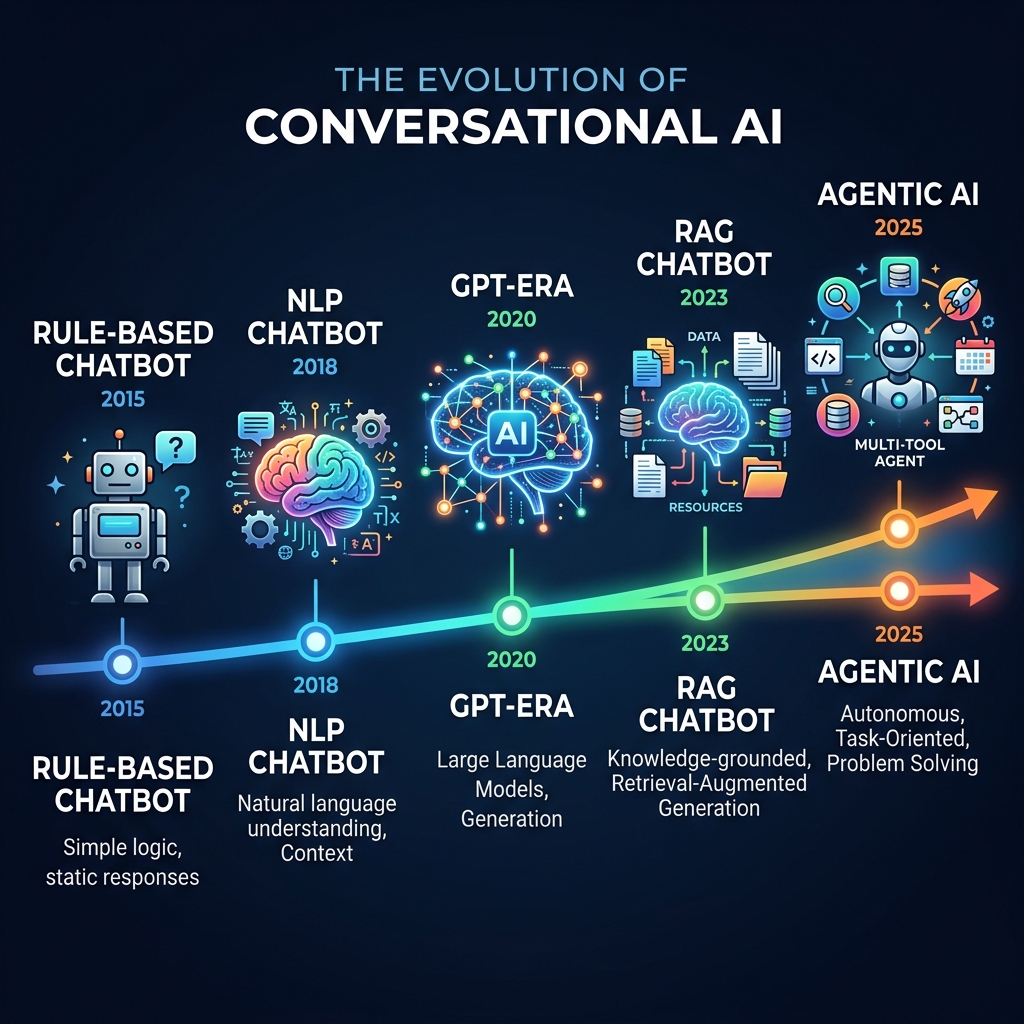

The evolution of conversational AI over the last few years has been nothing short of whiplash-inducing. We have gone from rigid phone trees to AI that can reason, remember, and act — and the shift happened in three distinct phases that most people lived through without realizing it.

Phase 1: The Decision Tree Era (Pre-2022)

If you have ever screamed "REPRESENTATIVE!" into a phone, you have experienced Phase 1 of conversational AI. This was the era of IVR menus and keyword-matching chatbots — systems that relied on you saying the exact magic word to route you down a pre-built path.

These systems were brittle. If you said "reschedule" instead of "change," you hit a dead end. If you combined two requests — "change my flight and add a bag" — the bot short-circuited. The technology worked only when the user adapted to the machine, not the other way around.

| Characteristic | Decision Tree Era |
|---|---|
| Input method | Exact keywords or button presses |
| Understanding | Pattern matching ("buy" ≠ "purchase") |
| Flexibility | Zero: one wrong word breaks the flow |
| User experience | "Press 1 for Sales, Press 2 for Support..." |
| Developer effort | Months of manually mapping every possible path |

Phase 1 chatbots didn't understand language. They matched strings. The user was doing all the cognitive work.
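To make that brittleness concrete, here is a minimal Python sketch of a Phase 1 keyword router. The route table, flow names, and fallback message are hypothetical; real IVR and chatbot builders used their own configuration formats, but exact-keyword lookup like this captures the behavior described above.

```python
# Hypothetical Phase 1 router: each exact keyword maps to one pre-built flow.
ROUTES = {
    "change": "flight_change_flow",
    "baggage": "baggage_flow",
    "refund": "refund_flow",
}

FALLBACK = "Sorry, I didn't understand. Press 1 for Sales, Press 2 for Support."


def route(utterance: str) -> str:
    """Return the flow for the first mapped keyword found as a whole word, else the fallback."""
    # Lowercase, turn commas into spaces, and split on whitespace: no notion of meaning.
    words = utterance.lower().replace(",", " ").split()
    for keyword, flow in ROUTES.items():
        if keyword in words:
            return flow  # first match wins; anything else in the sentence is ignored
    return FALLBACK


if __name__ == "__main__":
    print(route("I need to change my flight"))      # flight_change_flow
    print(route("I need to reschedule my flight"))  # fallback: "reschedule" was never mapped
    print(route("Change my flight and add a bag"))  # flight_change_flow; the bag request is silently dropped
```

Adding "reschedule" to `ROUTES` fixes one phrasing, but every synonym and every combination of requests needs its own hand-written entry, which is where the months of mapping went.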

Phase 2: Intent Recognition (2018–2022)

Then came Natural Language Understanding (NLU). Platforms like Dialogflow, LUIS, and Rasa brought intent classification into mainstream chatbot development: the AI could now recognize that "I want to buy a ticket" and "Get me a flight" expressed the same intent.

This was a genuine improvement. Instead of matching exact words, the system learned to classify what the user wanted. But there was a catch: it still routed you down pre-programmed, rigid paths. The AI understood your intent but could only respond with the script someone had written for that intent.
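A rough sketch of the Phase 2 pattern, assuming a toy setup: the intents, training phrases, and scripts below are hypothetical, and word-overlap (Jaccard) scoring stands in for the trained statistical model that platforms like Dialogflow, LUIS, or Rasa actually use. What it illustrates is the shape of the system: flexible matching on the way in, a fixed script on the way out.

```python
from __future__ import annotations

import re

# Hypothetical training phrases per intent. Word-overlap scoring below is a
# stand-in for the trained classifier a real NLU platform would use.
TRAINING_PHRASES = {
    "book_flight": [
        "I want to buy a ticket",
        "book a flight",
        "get me a flight to Paris",
        "purchase a plane ticket",
    ],
    "add_baggage": [
        "add a bag",
        "I need extra luggage",
        "check another suitcase",
    ],
}

# Each recognized intent still leads to exactly one pre-written response.
SCRIPTS = {
    "book_flight": "Sure! Where would you like to fly?",
    "add_baggage": "How many bags would you like to add?",
}

FALLBACK = "Sorry, I didn't understand."
STOPWORDS = {"i", "a", "to", "me", "the", "want", "need"}


def tokens(text: str) -> set[str]:
    """Lowercase, keep alphabetic words, and drop a few filler words."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def classify(utterance: str, threshold: float = 0.5) -> str | None:
    """Return the intent of the most similar training phrase (Jaccard), or None if too weak."""
    query = tokens(utterance)
    best_intent, best_score = None, 0.0
    for intent, phrases in TRAINING_PHRASES.items():
        for phrase in phrases:
            example = tokens(phrase)
            union = query | example
            score = len(query & example) / len(union) if union else 0.0
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent if best_score >= threshold else None


def respond(utterance: str) -> str:
    """Map the utterance to an intent, then return that intent's fixed script."""
    intent = classify(utterance)
    return SCRIPTS[intent] if intent else FALLBACK


if __name__ == "__main__":
    print(respond("I want to buy a ticket"))          # Sure! Where would you like to fly?
    print(respond("Get me a flight"))                 # Sure! Where would you like to fly?
    print(respond("Change my flight and add a bag"))  # Sorry, I didn't understand.
```

Different wordings now land on the same intent, but the compound request still falls through: it overlaps partially with both intents and matches neither well enough. And even when classification succeeds, the reply is whatever script someone wrote for that intent.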

Phase 3: The LLM Revolution (2023–2025)

Large Language Models blew the doors off the rigid scripts. GPT-4, Claude, Gemini — these models could understand deep context, handle interruptions, and generate fluid, human-like responses on the fly.

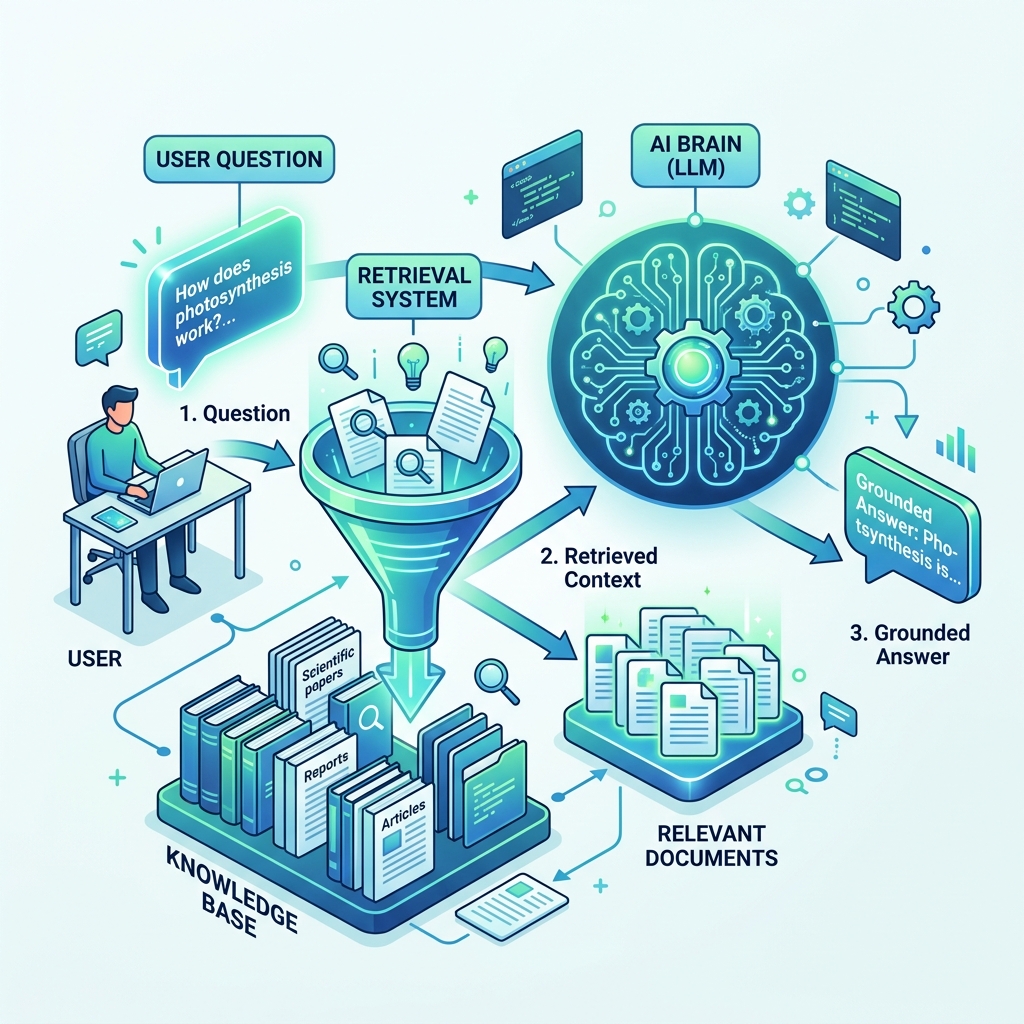

The fundamental shift: we moved from retrieving pre-written answers to dynamically synthesizing information. The AI didn't need someone to write a response for every possible question. It could read your documentation, understand it, and compose an answer in real time.

This was the phase that made AI chatbots genuinely useful for businesses. Instead of spending months scripting conversation flows, you could point the AI at your website and documentation, a pattern known as retrieval-augmented generation (RAG), and it could start answering customer questions from that content within minutes.
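The retrieve-then-generate loop behind this phase can be sketched in a few dozen lines. This is a minimal illustration under several assumptions: the documentation chunks are made up, simple word-overlap scoring stands in for a production retriever such as BM25 or embedding search, and `llm` is a hypothetical callable you would wire to whichever model API you use. The handoff threshold `min_score` is likewise an illustrative choice, not a recommended value.

```python
from __future__ import annotations

import re
from typing import Callable

# Hypothetical knowledge base; in practice these chunks would come from
# crawling a website or splitting documentation files.
DOCS = [
    "Refunds are issued to the original payment method within 5 business days.",
    "You can change your flight up to 24 hours before departure in Manage Booking.",
    "Each passenger may check one bag up to 23 kg; extra bags cost a fee.",
]

STOPWORDS = {"a", "an", "the", "to", "my", "i", "can", "how", "do", "is",
             "in", "of", "up", "you", "your"}


def tokens(text: str) -> set[str]:
    """Lowercase, keep alphanumeric words, and drop common filler words."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def retrieve(question: str, docs: list[str], k: int = 2) -> list[tuple[float, str]]:
    """Score each chunk by the fraction of question words it contains; keep the top k non-zero hits."""
    q = tokens(question)
    scored = [(len(q & tokens(doc)) / len(q) if q else 0.0, doc) for doc in docs]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [pair for pair in scored[:k] if pair[0] > 0]


def build_prompt(question: str, passages: list[str]) -> str:
    """Ground the model: it is told to answer only from the retrieved passages."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer the customer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def answer(question: str, llm: Callable[[str], str], min_score: float = 0.3) -> str:
    """Retrieve relevant passages, then let the model compose a reply, or hand off to a human."""
    hits = retrieve(question, DOCS)
    if not hits or hits[0][0] < min_score:
        return "Let me connect you with a member of our team."  # nothing relevant: human handoff
    return llm(build_prompt(question, [doc for _, doc in hits]))


if __name__ == "__main__":
    echo_llm = lambda prompt: prompt  # placeholder so the sketch runs without a real model
    print(answer("Can I change my flight?", llm=echo_llm))   # prints the grounded prompt
    print(answer("What is your pet policy?", llm=echo_llm))  # Let me connect you with a member of our team.
```

Because replies are composed from whatever is in `DOCS`, covering a new topic means adding a document rather than scripting a new flow.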

The 3 Eras Side by Side

| Dimension | Decision Trees | Intent/NLU | LLM + RAG |
|---|---|---|---|
| Understanding | Keyword matching | Intent classification | Full language comprehension |
| Responses | Pre-written scripts | Pre-written per intent | Dynamically generated |
| Context handling | None | Within a single flow | Across entire conversation |
| Setup time | Months | Weeks | Minutes to hours |
| Coverage | Only mapped paths | Only trained intents | Anything in your content |
| Failure mode | "I didn't understand" | "I didn't understand" | Graceful fallback to human |
| Scaling content | Linear effort | Linear effort | Near-zero marginal cost |

Why This History Matters for Your Business

If you are evaluating AI chatbot solutions in 2026, this history gives you a critical filter: which era is the vendor still living in?

The talking part is solved. The question now is: what does the AI actually do with that conversation?

That question is what defines the next era — the shift to agentic AI, where the chatbot stops being a receptionist and starts being an autonomous project manager.
