We have largely solved the "talking" part of conversational AI. The entire industry's focus right now is on turning the AI from a friendly receptionist into an autonomous project manager — one that doesn't just answer questions but actually executes workflows, remembers everything, and sees what you see.

The Shift: From "Chat" to "Agent"

As we move through 2026, the hype around just having a bot that "talks well" is fading. Every platform has an LLM now. The differentiator isn't language fluency — it's what happens after the conversation.

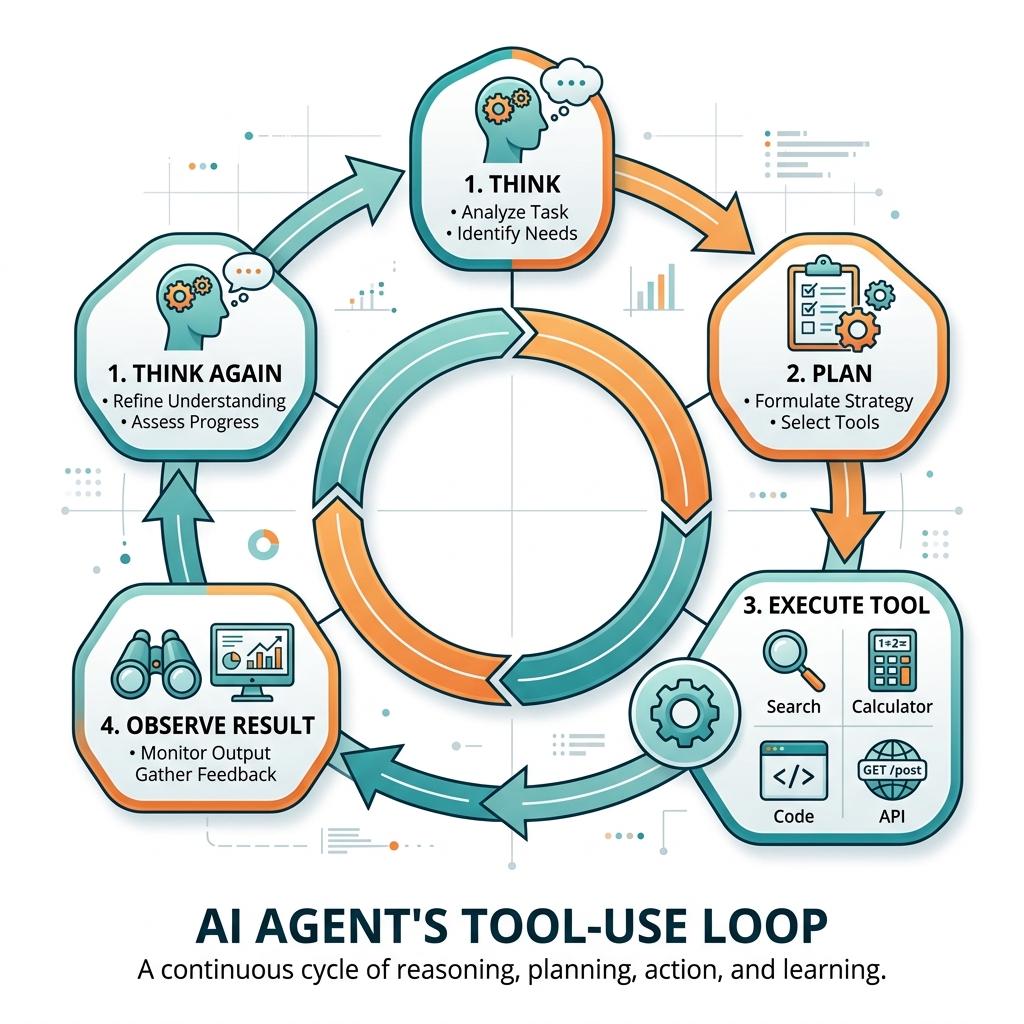

The word you will hear everywhere this year is agentic. An agentic AI doesn't stop at answering questions. It takes action: it parses your request, decides what needs to happen, calls the right APIs, updates the right databases, and reports back, all without a human clicking buttons in between.

Conversational AI answers your questions. Agentic AI completes your tasks. The interface is the same — natural language. The outcome is fundamentally different.
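The parse, decide, act, report cycle described above can be sketched as a small loop. This is a minimal sketch under stated assumptions, not a production agent: `scripted_model` is a hypothetical stand-in for an LLM call that picks the next action, and the two tools (`check_calendar`, `send_confirmation`) are hypothetical placeholders for a calendar API and a messaging service, using a real estate request as the example.

```python
from typing import Callable

# Hypothetical tools: each stands in for a real API call or database write.
def check_calendar(args: dict) -> str:
    return f"agent free at {args['time']}"

def send_confirmation(args: dict) -> str:
    return f"buyer notified: showing at {args['address']}, {args['time']}"

TOOLS: dict[str, Callable[[dict], str]] = {
    "check_calendar": check_calendar,
    "send_confirmation": send_confirmation,
}

def scripted_model(request: str, history: list[str]) -> dict:
    """Hypothetical stand-in for an LLM that chooses the next action from the
    request and the results so far. Here the choices are simply scripted."""
    script = [
        {"tool": "check_calendar", "args": {"time": "2pm"}},
        {"tool": "send_confirmation", "args": {"address": "42 Oak St", "time": "2pm"}},
        {"finish": "Showing booked for 42 Oak St at 2pm; buyer confirmed."},
    ]
    return script[len(history)]

def run_agent(request: str, max_steps: int = 5) -> tuple[str, list[str]]:
    """Decide -> act -> observe, repeated until the model reports it is done."""
    history: list[str] = []
    for _ in range(max_steps):              # cap steps so a confused model can't loop forever
        action = scripted_model(request, history)
        if "finish" in action:              # the model decides the task is complete
            return action["finish"], history
        result = TOOLS[action["tool"]](action["args"])    # act: call the chosen tool
        history.append(f"{action['tool']} -> {result}")   # observe: record the outcome
    return "Stopped: step limit reached.", history

answer, trace = run_agent("Schedule a showing for 42 Oak St tomorrow at 2pm")
print(answer)  # Showing booked for 42 Oak St at 2pm; buyer confirmed.
```

The `max_steps` cap is a design choice worth keeping in any real loop: an agent that can call tools on its own also needs a hard limit on how many times it can do so.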

Three Frontiers of Agentic AI

The shift to agentic AI is happening on three simultaneous fronts. Each one represents a massive change in how humans and AI systems collaborate.

1. Voice-to-Workflow Automation

We are stepping out of the browser window and into the physical world. The next generation of conversational AI is voice-driven — not as a novelty, but as a workflow automation layer for people whose hands are full.

Picture a field service technician standing in front of a broken HVAC unit on the third floor of a building. Their hands are holding tools. They can't type. But they can speak: "Compressor is blown, order a replacement."

The AI doesn't just transcribe that. It parses the entities (HVAC unit, third floor, blown compressor), pings the inventory database to check stock, drafts the purchase order, and updates the maintenance schedule — turning unstructured voice directly into executed backend workflows.
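The field-service flow above can be sketched end to end. This is a minimal sketch assuming in-memory dictionaries and lists as stand-ins for the inventory, purchasing, and maintenance systems; `extract_entities` uses keywords and a regex where a real system would use an LLM or an entity-recognition model, and every name and stock count here is hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical in-memory stand-ins for the inventory, purchasing,
# and maintenance systems a real deployment would call.
INVENTORY = {"compressor": 0, "fan motor": 3}
PURCHASE_ORDERS: list[dict] = []
MAINTENANCE_SCHEDULE: list[dict] = []

@dataclass
class WorkRequest:
    equipment: str
    location: str
    failed_part: str

def extract_entities(transcript: str) -> WorkRequest:
    """Keyword/regex stand-in for the LLM or NER step that pulls out entities."""
    text = transcript.lower()
    equipment = "HVAC unit" if "hvac" in text else "unknown"
    floor = re.search(r"\w+ floor", text)
    part = next((p for p in INVENTORY if p in text), "unknown")
    return WorkRequest(equipment, floor.group(0) if floor else "unknown", part)

def execute(transcript: str) -> list[str]:
    """Turn one spoken request into backend operations; return what was done."""
    req = extract_entities(transcript)
    if req.failed_part == "unknown":
        return ["could not identify the failed part; ask the technician"]
    actions = []
    if INVENTORY[req.failed_part] == 0:          # out of stock: draft a purchase order
        PURCHASE_ORDERS.append({"part": req.failed_part, "qty": 1})
        actions.append(f"PO drafted for 1 {req.failed_part}")
    else:                                        # in stock: reserve one unit
        INVENTORY[req.failed_part] -= 1
        actions.append(f"reserved 1 {req.failed_part} from stock")
    MAINTENANCE_SCHEDULE.append({
        "equipment": req.equipment,
        "location": req.location,
        "task": f"replace {req.failed_part}",
    })
    actions.append(f"install scheduled: {req.equipment}, {req.location}")
    return actions
```

With the hypothetical transcript "The HVAC unit on the third floor has a blown compressor, order a replacement.", `execute` drafts a purchase order (the stand-in stock count is zero) and books an install on the third floor. When no known part is found, it asks for clarification instead of ordering something at random.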

| Industry | Voice Command | What the AI Executes |
|---|---|---|
| Field service | "Compressor is blown, order a replacement" | Checks inventory → creates PO → schedules install |
| Healthcare | "Patient in room 302 needs vitals rechecked at 3pm" | Updates nursing task board → sets alert → logs note |
| Warehousing | "Pallet B-17 is damaged, pull it from the pick line" | Updates WMS → flags QC → adjusts available inventory |
| Real estate | "Schedule a showing for 42 Oak St tomorrow at 2pm" | Checks agent calendar → sends buyer confirmation → updates MLS |
| Hospitality | "Room 415 checkout extended to 3pm, add late fee" | Updates PMS → adjusts housekeeping queue → applies charge |

The pattern is the same across every industry: unstructured natural language in, structured database operations out. The AI is the translation layer between how humans think and how systems work.
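One practical piece of that translation layer is checking the structured output before it reaches a real system. The sketch below assumes the model emits a JSON object naming an action and its fields; the action names, field types, and the pallet-ID pattern are hypothetical, and production systems often use JSON Schema or a model provider's function-calling feature for the same job.

```python
import json
import re

# Hypothetical whitelist: the only operations the model may request,
# with the fields (and types) each one requires.
ALLOWED_ACTIONS = {
    "pull_pallet": {"pallet_id": str, "reason": str},
    "extend_checkout": {"room": str, "new_time": str},
}
PALLET_ID = re.compile(r"^[A-Z]-\d+$")   # assumed ID format, e.g. "B-17"

def parse_operation(model_output: str) -> dict:
    """Turn the model's JSON text into a validated operation, or raise ValueError."""
    try:
        op = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(op, dict):
        raise ValueError("operation must be a JSON object")
    action = op.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    for field_name, field_type in ALLOWED_ACTIONS[action].items():
        if not isinstance(op.get(field_name), field_type):
            raise ValueError(f"{action}: missing or invalid {field_name!r}")
    if action == "pull_pallet" and not PALLET_ID.match(op["pallet_id"]):
        raise ValueError(f"bad pallet id: {op['pallet_id']!r}")
    return op
```

For the warehousing command, a valid model output such as `{"action": "pull_pallet", "pallet_id": "B-17", "reason": "damaged"}` passes through unchanged, while an unknown action or a malformed ID is rejected before anything touches the WMS.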

2. Persistent Memory — The AI "Brain"

Historically, AI has suffered from "Groundhog Day" syndrome — it forgets who you are the moment you close the window. Every conversation starts from scratch. You re-explain your context, your preferences, your history. Every. Single. Time.

The evolution right now is focused on persistent knowledge management. We are moving toward systems where the AI maintains its own internal, structured "wiki" of a client, a project, or a system.

This is closely related to what Andrej Karpathy calls the "LLM Wiki" pattern — the idea that AI systems should maintain their own structured, evolving knowledge bases rather than starting from raw documents every time.

3. Multimodal Collaboration

Conversational AI is no longer just text. It's no longer just voice. It is sight, too.

You can point a camera at a complex piece of hardware, or share your screen showing a messy spreadsheet, and have a fluid, real-time conversation with the AI about exactly what it is "seeing" in front of it.

This opens up entirely new use cases that were impossible with text-only AI:

- Visual diagnosis — Point camera at broken equipment, AI identifies the issue

- Document analysis — Share a contract or invoice, AI extracts and acts on the data

- Screen assistance — Share your screen, AI walks you through the software

- Quality inspection — Camera on a production line, AI flags defects in real time

- Spatial understanding — AR overlay showing AI annotations on physical objects

What This Means for the Industry

The companies that will win are not the ones with the best chatbot. They are the ones that connect the conversational layer directly to the database and the workflow engine.

| Era | The AI is... | Value to business |
|---|---|---|
| Phase 1-2 | A phone menu with a personality | Cost reduction (deflect calls) |
| Phase 3 | A knowledgeable receptionist | Better CX + faster resolution |
| Phase 4 (now) | An autonomous project manager | Revenue generation + operational efficiency |

Building Toward This Future

At GetGenius, we are building the foundation that makes agentic AI possible:

- Hybrid BM25 + semantic search for accurate retrieval

- Multi-query expansion for comprehensive coverage

- Knowledge Lint for training data quality

- Auto-synthesized knowledge for persistent intelligence
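GetGenius's own implementation isn't described here; as a generic illustration of the first two items, this sketch combines Okapi BM25 keyword scores with embedding similarity and merges the two rankings using Reciprocal Rank Fusion (RRF), then reuses RRF for multi-query expansion. Assumptions: BM25 uses the common defaults k1 = 1.5 and b = 0.75, RRF uses k = 60, `embed` is a character-trigram placeholder for a real embedding model, and the query variants are passed in by hand where an LLM would normally generate them.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())

def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Okapi BM25 with the non-negative '+1' IDF variant."""
    if not docs:
        return []
    tokenized = [tokenize(d) for d in docs]
    n = len(docs)
    avgdl = sum(len(t) for t in tokenized) / n
    df = Counter(term for t in tokenized for term in set(t))   # document frequency
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        s = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            s += idf * tf[term] * (k1 + 1) / (tf[term] + k1 * (1 - b + b * len(toks) / avgdl))
        scores.append(s)
    return scores

def embed(text: str) -> Counter:
    """PLACEHOLDER for a real embedding model: character-trigram counts."""
    t = f"  {text.lower()} "
    return Counter(t[i:i + 3] for i in range(len(t) - 2))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def ranking(scores: list[float]) -> list[int]:
    """Document indices ordered best-first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)

def rrf(rankings: list[list[int]], k: int = 60) -> list[int]:
    """Reciprocal Rank Fusion: each list adds 1 / (k + rank) to a document's score."""
    fused: Counter = Counter()
    for r in rankings:
        for rank, doc in enumerate(r, start=1):
            fused[doc] += 1 / (k + rank)
    return [doc for doc, _ in sorted(fused.items(), key=lambda kv: kv[1], reverse=True)]

def hybrid_search(query: str, docs: list[str]) -> list[int]:
    """Fuse a keyword (BM25) ranking with a semantic (embedding) ranking."""
    lexical = ranking(bm25_scores(query, docs))
    q = embed(query)
    semantic = ranking([cosine(q, embed(d)) for d in docs])
    return rrf([lexical, semantic])

def multi_query_search(variants: list[str], docs: list[str]) -> list[int]:
    """Multi-query expansion: search each rewrite of the question, then fuse."""
    return rrf([hybrid_search(v, docs) for v in variants])
```

RRF is used here because it merges rankings without having to put BM25 scores and cosine similarities on the same scale; weighted score blending is a common alternative.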

Accurate retrieval, clean data, and persistent knowledge are the prerequisites for AI that can act autonomously. You can't build a reliable agent on a foundation that gives wrong answers.

The future of customer support isn't a better chatbot. It's an AI teammate that resolves issues, not just discusses them.

Related: The 3 Eras of Conversational AI | Beyond RAG: Auto-Synthesized Knowledge | Dark AI Traffic: The Invisible Problem